At this point in the series you should have a system capable of device assignment and properly configured to sequester at least the GPU from the host for assignment to a guest. You should already have your distribution packages installed for QEMU/KVM and libvirt, including virt-manager. In this article we'll cover installation and configuration of the guest for a UEFI-capable graphics card and a UEFI-capable guest OS. This is the most directly supported configuration, and unless you absolutely cannot upgrade your graphics card or guest OS, it's the one most users should aim for.

I'll be using the hardware configuration discussed in part 1 of this series along with Windows 8.1 (64 bit) as my guest operating system. Both my EVGA GTX 750 and Radeon HD8570 OEM support UEFI as determined here, and I'll cover the details unique to each. Each GPU is connected via HDMI to a small LED TV, which will also be my primary target for audio output. Perhaps we'll discuss in future articles the modifications necessary for host-based audio.

The first step is to start virt-manager, which you should be able to do from your host desktop. If your host doesn't have a desktop, virt-manager can connect remotely over ssh. We'll mostly be using virt-manager for installation and setup, and maybe future maintenance. After installation you can set the VM to auto start or start and stop it with the command line virsh tools. If you've never run virt-manager before, you probably want to start with configuring your storage and networking, either via Edit->Connection Details or by right-clicking on the connection and selecting Details in the drop-down. I have a mounted filesystem set up to store all of my VM images and NFS mounts for ISO storage. You can also configure full physical devices, volume groups, iSCSI, etc. Volume groups are another favorite of mine for their flexibility and performance. libvirt's default is to create VM images in /var/lib/libvirt/images, so it's also fine if you simply want to mount space there.

Next you'll want to click over to the Network Interfaces tab. If you want the host and guest to be able to communicate over the network, which is necessary if you want to use tools like Synergy to share a mouse and keyboard, then you'll want to create a bridge device and add your primary host network to the bridge. If host-guest communication is not important for you, you can simply use a macvtap connection when configuring the VM networking. This may be perfectly acceptable if you're creating a multi-seat system and providing mouse and keyboard via some other means (USB passthrough or host controller assignment).

Now we can create our new VM:

My ISOs are stored on an NFS mount that I already configured, so I use the Local install media option. Clicking Forward brings us to the next dialog where I select my ISO image location and select the OS type and version:

This allows libvirt to pre-configure some defaults for the VM, some of which we'll change as we move along. Stepping forward again, we can configure the VM RAM size and number of vCPUs:

For my example VM I'll use the defaults. These can be changed later if desired. Next we need to create a disk for the new VM:

The first radio button will create the disk with an automatic name in the default storage location. The second radio button allows you to name the image and specify the type, so I generally select the second option. For my VM, I've created a disk with these parameters:

In this case I've created a 50GB, sparse, raw image file. Obviously you'll need to size the disk image based on your needs; 50GB certainly doesn't leave much room for games. You can also choose whether to allocate the entire image now or let it fault in on demand. There's a little extra overhead in using a sparse image, so if space saving isn't a concern, allocate the entire disk. I would also generally only recommend a qcow format if you're looking for the space saving or snapshotting features provided by qcow. Otherwise raw provides better performance for a disk image based VM.
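To make the sparse versus fully allocated choice a little more concrete, here is a minimal Python sketch of what the two options amount to at the file level for a raw image. The helper name `create_raw_image` and the demo file name are hypothetical; virt-manager does the equivalent work for you, so this is only an illustration, not how libvirt implements it.

```python
import os
import tempfile


def create_raw_image(path, size_bytes, preallocate=False):
    """Create a raw image file and return its os.stat() result.

    Hypothetical helper for illustration; not part of libvirt or virt-manager.
    """
    with open(path, "wb") as f:
        if preallocate:
            # Reserve all blocks up front (POSIX systems only).
            os.posix_fallocate(f.fileno(), 0, size_bytes)
        else:
            # Sparse: only the apparent size is set; blocks are
            # allocated later, when the guest first writes to them.
            f.truncate(size_bytes)
    return os.stat(path)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        st = create_raw_image(os.path.join(tmp, "demo.img"), 64 * 1024 * 1024)
        # st_blocks counts the 512-byte units actually allocated on disk.
        print("apparent bytes:", st.st_size)
        print("allocated bytes:", st.st_blocks * 512)
```

On most filesystems the sparse image reports far fewer allocated bytes than its apparent size, which is the space saving you trade against the small on-demand allocation overhead.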

On the final step of the setup, we name our VM and select the option to customize the configuration before install. I generally use this option anyway, and for OVMF it's required:

Clicking Finish here brings up a new dialog, where in the overview we need to change our Firmware option from BIOS to UEFI:

If UEFI is not available, your libvirt and virt-manager tools may be too old. Note that I'm using the default i440FX machine type, which I recommend for all Windows guests. If you absolutely must use Q35, select it here and complete the VM installation, but you'll later need to edit the XML and use a wrapper script around qemu-kvm to get a proper configuration until libvirt support for Q35 improves. Select Apply and move down to the Processor selection:

Here we can change the number of vCPUs for the guest if we've had second thoughts since our previous selection. We can also change the CPU type exposed to the guest. Often for PCI device assignment and optimal performance we'll want to use host-passthrough here, which is not available in the drop-down and needs to be typed manually. This is also our opportunity to change the socket/core configuration for the VM. I'll change my configuration here to expose 4 vCPUs as a single socket, dual-core with threads, so that I can later show vCPU pinning with a thread example. There is pinning configuration available here, but I tend to configure this by editing the XML directly, which I'll show later. We can finish the install before we worry about that.

The next important option for me is the Disk configuration:

As shown here, I've changed my Disk bus from the default to VirtIO. VirtIO is a paravirtualized disk interface, which means that it's designed to be high performance for virtual machines. (EDIT: for further optimization using virtio-scsi rather than virtio-blk, see the comments below) Unfortunately Windows guests do not support VirtIO without additional drivers, so we'll need to configure the VM to provide those drivers during installation. For that, click the Add Hardware button on the bottom left of the screen. Select Storage and, just as we did with the installation media, locate the ISO image for the virtio drivers on your system. The latest virtio drivers can be found here. The dialog should look something like this:

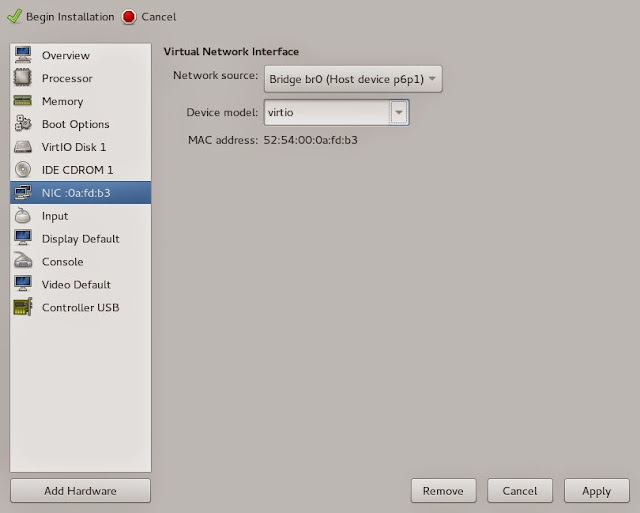

Click Finish to add the CDROM. Since we're adding virtio drivers anyway, let's also optimize our VM NIC by changing it to virtio as well:

The remaining configuration is for your personal preference. If you're using a remote system and do not have ssh authorized keys configured, I'd advise changing the Display to use VNC rather than Spice, otherwise you'll need to enter your password half a dozen times. Select Begin Installation to... begin installation.

In this configuration OVMF will drop to an EFI Shell from which you can navigate to the boot executable:

Type bootx64 and press enter to continue installation and press a key when prompted to boot from the CDROM. I expect this would normally happen automatically if we hadn't added a second CDROM for virtio drivers.

At this point the guest OS installation should proceed normally. When prompted for where to install Windows, select Load Driver, navigate to your CDROM, select Win8, AMD64 (for 64 bit drivers). You'll be given a list of several drivers to choose from. Select all of the drivers using shift-click and click Next. You should now see your disk and can proceed with installation. Generally after installation, the first thing I do is apply at least the recommended updates and reboot the VM.

Before we shut down to reconfigure, I like to install TightVNC server so I can have remote access even if something goes wrong with the display. I generally do a custom install of TightVNC, disabling client support, and setting security appropriate to the environment. Make note of the IP address of the guest and verify the VNC connection works. Shut down the VM and we'll trim it down, tune it further, and add a GPU.

Start with the machine details view in virt-manager and remove everything we no longer need. That includes the CDROM devices, the tablet, the display, sound, serial, spice channel, video, virtio serial, and USB redirectors. We can also remove the IDE controller altogether now. That leaves us with a minimal config that looks something like this:

We can also take this opportunity to do a little further tuning by directly editing the XML. On the host, run virsh edit <domain> as root or via sudo. Before the <os> tag, you can optionally add something like the following:

    <memoryBacking>
      <hugepages/>
    </memoryBacking>
    <cputune>
      <vcpupin vcpu='0' cpuset='2'/>
      <vcpupin vcpu='1' cpuset='3'/>
      <vcpupin vcpu='2' cpuset='6'/>
      <vcpupin vcpu='3' cpuset='7'/>
    </cputune>

The <memoryBacking> tag allows us to specify huge pages for the guest. This helps improve the VM efficiency by skipping page table levels when doing address translations. In order for this to work, you must create sufficient huge pages on the host system. Personally I like to do this via the kernel commandline by adding something like hugepages=2048 in /etc/sysconfig/grub and regenerating the grub configuration as we did in the previous installment of this series. Most processors will only support 2MB hugepages, so by reserving 2048, we're reserving 4096MB worth of memory, which is enough for the 4GB guest I've configured here. Note that transparent huge pages are not effective for VMs making use of device assignment because all of the VM memory needs to be allocated and pinned for the IOMMU before the VM runs. This means the consolidation passes used by transparent huge pages will not be able to combine pages later.
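The huge page arithmetic is easy to get wrong when the guest size changes, so here is a small Python sketch of it. The function names are hypothetical helpers written for this example; the field names `HugePages_Total`, `HugePages_Free`, and `Hugepagesize` are the ones the kernel reports in /proc/meminfo. The 2MB default page size is an assumption that matches the case described above.

```python
def hugepages_needed(guest_mem_mib, hugepage_size_kib=2048):
    """Number of huge pages needed to back guest_mem_mib of guest RAM.

    Assumes 2MB (2048 KiB) huge pages unless told otherwise.
    """
    guest_kib = guest_mem_mib * 1024
    # Round up so a partial page still gets a full huge page.
    return (guest_kib + hugepage_size_kib - 1) // hugepage_size_kib


def parse_meminfo_hugepages(meminfo_text):
    """Pull the huge page counters out of /proc/meminfo-style text."""
    wanted = {"HugePages_Total", "HugePages_Free", "Hugepagesize"}
    result = {}
    for line in meminfo_text.splitlines():
        key, _, value = line.partition(":")
        if key in wanted:
            # Values look like "2048" or "2048 kB"; keep the number only.
            result[key] = int(value.split()[0])
    return result


if __name__ == "__main__":
    print("pages for a 4GB guest:", hugepages_needed(4096))
    try:
        with open("/proc/meminfo") as f:
            print(parse_meminfo_hugepages(f.read()))
    except OSError:
        print("/proc/meminfo not available on this system")
```

After rebooting with the new commandline, comparing `HugePages_Free` against the result of `hugepages_needed` for your guest size is a quick way to check that enough pages were actually reserved before starting the VM.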

As advertised, we're also configuring CPU pinning. Since I've advertised a single socket, dual-core, threaded processor to my guest, I pin to the same configuration on the host. Processors 2 & 3 on the host are cores 2 and 3 (counting from zero) of my quad-core processor, and processors 6 & 7 are the threads corresponding to those cores. We can determine this by looking at the "core id" line in /proc/cpuinfo on the host. It generally does not make sense to expose threads to the guest unless it matches the physical host configuration, as we've configured here. Save the XML, and if you've chosen to use hugepages, remember to reboot the host so the new commandline takes effect; otherwise libvirt will fail to start the VM.
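Reading "core id" by eye gets tedious on larger systems, so here is a minimal Python sketch that groups host processors by core from /proc/cpuinfo-style text. The function name `thread_siblings` is hypothetical, and the sketch assumes the x86 layout where each processor is described in its own blank-line-separated block containing "processor", "physical id", and "core id" lines.

```python
from collections import defaultdict


def thread_siblings(cpuinfo_text):
    """Map (physical id, core id) to the sorted host processor numbers on it."""
    siblings = defaultdict(list)
    # Each logical processor is described in its own blank-line-separated block.
    for block in cpuinfo_text.strip().split("\n\n"):
        fields = {}
        for line in block.splitlines():
            key, sep, value = line.partition(":")
            if sep:
                fields[key.strip()] = value.strip()
        if "processor" in fields and "core id" in fields:
            core = (int(fields.get("physical id", 0)), int(fields["core id"]))
            siblings[core].append(int(fields["processor"]))
    return {core: sorted(cpus) for core, cpus in siblings.items()}


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            for core, cpus in sorted(thread_siblings(f.read()).items()):
                print("socket %d core %d: processors %s" % (core[0], core[1], cpus))
    except OSError:
        print("/proc/cpuinfo not available on this system")
```

On a host laid out like mine, the entries for cores 2 and 3 would list processors 2 and 6, and 3 and 7, which are the four host processors used in the pinning above.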

I'll start out assigning my Radeon HD8570 to the guest, so we don't yet need to hide any hypervisor features to make things work. Return to virt-manager and select Add Hardware. Select PCI Host Device and find your graphics card GPU function for assignment in the list. Repeat this process for the graphics card audio function. My VM details now look like this:

Start the VM. This time there will not be any console available via virt-manager. Instead, the display should initialize and you should see the TianoCore boot splash, followed by the Windows startup, on the physical monitor connected to the graphics card. Once the guest is booted, you can reconnect to it using the guest-based VNC server.

At this point we can use the browser to go to amd.com and download driver software for our device. For AMD software it's recommended to specify the driver for your device using the drop down menus on the website rather than allowing the tools to select a driver for you. This holds true for updates via the runtime Catalyst interface later as well. Allowing driver detect often results in blue screens.

At some point during the download, Windows will probably figure out that its hardware changed and switch to its built-in drivers for the device, and the screen resolution will increase. This is a good sign that things are working. Run the Catalyst installation program. I generally use an Express Installation, which hopefully implies that Custom Installations will also work. After installation completes, reboot the VM and you should now have a fully functional, fully graphics accelerated VM.

The GeForce card is nearly as easy, but we first need to work around some of the roadblocks Nvidia has put in place to prevent you from using the hardware you've purchased in the way that you desire (and by my reading conforms to the EULA for their software, but IANAL). For this step we again need to run virsh edit on the VM. Within the <features> section, remove everything between the <hyperv> tags, including the tags themselves. In their place add the following tags:

    <kvm>
      <hidden state='on'/>
    </kvm>

Additionally, within the <clock> tag, find the timer named hypervclock and remove the line containing it completely. Save and exit the edit session.
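If you'd rather script these two XML changes than make them by hand, the following Python sketch applies them to a domain XML string using only the standard library. The function name `hide_kvm_from_guest` and the sample domain are hypothetical and heavily trimmed; in practice you would feed it the output of `virsh dumpxml` and load the result back with `virsh define`, and it's worth diffing the result against the original before doing so.

```python
import xml.etree.ElementTree as ET


def hide_kvm_from_guest(domain_xml):
    """Return domain XML with <hyperv> removed, <kvm><hidden state='on'/></kvm>
    added under <features>, and the hypervclock timer dropped from <clock>."""
    root = ET.fromstring(domain_xml)

    features = root.find("features")
    if features is None:
        features = ET.SubElement(root, "features")
    # Remove the Hyper-V enlightenments, tags included.
    for hyperv in features.findall("hyperv"):
        features.remove(hyperv)
    # Add (or reuse) the <kvm> element and hide the hypervisor signature.
    kvm = features.find("kvm")
    if kvm is None:
        kvm = ET.SubElement(features, "kvm")
    hidden = kvm.find("hidden")
    if hidden is None:
        hidden = ET.SubElement(kvm, "hidden")
    hidden.set("state", "on")

    # Drop the hypervclock timer, leaving any other timers in place.
    clock = root.find("clock")
    if clock is not None:
        for timer in clock.findall("timer"):
            if timer.get("name") == "hypervclock":
                clock.remove(timer)

    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    # Made-up, minimal domain used only to exercise the function.
    sample = (
        "<domain type='kvm'><name>demo</name>"
        "<features><acpi/><hyperv><relaxed state='on'/></hyperv></features>"
        "<clock offset='localtime'><timer name='rtc' tickpolicy='catchup'/>"
        "<timer name='hypervclock' present='yes'/></clock></domain>"
    )
    print(hide_kvm_from_guest(sample))
```

The function is written to be safe to run twice: a second pass finds no <hyperv> or hypervclock entries and leaves the existing <hidden> element as it is.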

We can now follow the same procedure used in the above Radeon example, add the GPU and audio function to the VM, boot the VM and download drivers from nvidia.com. As with AMD, I typically use the express installation. Restart the VM and you should now have a fully accelerated Nvidia VM.

For either GPU type I highly suggest continuing with the instructions in my article on configuring the audio device to use Message Signaled Interrupts (MSI) to improve efficiency and avoid glitchy audio. MSI for the GPU function is typically enabled by default for AMD and is not necessarily a performance gain on Nvidia, due to an extra trap that Nvidia takes through QEMU to re-enable the MSI via a PCI config write.

Hopefully you now have a working VM with a GPU assigned. If you don't, please comment on what variants of the above setup you'd like to see and I'll work on future articles. I'll reiterate that the above is my preferred and recommended setup, but VGA-mode assignment with SeaBIOS can also be quite viable provided you're not using Intel IGD graphics for the host (or you're willing to suffer through patching your host kernel for the foreseeable future). Currently on my ToDo list for this series are possibly a UEFI install of Windows 7 (if that's possible) and a VGA-mode example that disables IGD on my host, uses the GTX750 for the host, and assigns the HD8570. The latter will require a simple qemu-kvm wrapper script to insert the x-vga=on option for vfio-pci. After that I'll likely do a Q35 example with a more complicated wrapper, unless libvirt beats me to adding better native support for Q35. I will not be doing SLI/Crossfire examples: I don't have the hardware for it, there's too much proprietary black magic in SLI, and I really don't see the point of it given the performance of single card solutions today. Stay tuned for future articles and please suggest or up-vote what you'd like to see next.